From constructing a confidence interval for a point predictor to hypothesis testing, statistics can be a complex discipline to unravel. Luckily, this guide will help you get to grips with the broad field of data analysis by walking you through the basics of its origins and composition.

What is Statistics?

To begin answering this question, ask yourself: what is the value of data? While this is a question modern policy makers have to examine with extreme care, investigating the value of data isn’t an exclusively modern phenomenon. We’re all familiar with the images of data and data analysis from the 90s, which typically drew on the caricatures brought forth by the dawn of the digital age, The Matrix being a prime example. In the present day, statistical data and the statistical software to analyse it are available to everyone with access to the internet. From the algorithms that match your dating profile with another to the way stores identify which items to put on sale, data is ubiquitous in our modern lives.

Statistical analysis, however, has been around for centuries. Early statisticians made the most of the methods they had at their disposal to collect, sort and register categorical and quantitative data. While their job didn’t involve the inferential tools of Bayesian statistics, the basic principles have remained the same throughout the centuries: to collect, analyse and interpret data in order to make more informed decisions. While today we concern ourselves with concepts in methodology and analysis such as sample size, raw data or effect size, the collection of demographic and economic data throughout history has mostly been concerned with tracking movements in the economy, population and agriculture.

While more detailed accounts of the historical evolution of statistics exist, it can be broken down into three basic phases. The first involved collecting census and observational data to improve sanitary and economic conditions. The second, implemented heavily after the Second World War, was registering demographic and economic data in government databases. The third, which extends to the present day, includes the revolutions in statistical inference brought about by technological advances.

With life-saving fields such as biostatistics, the improvement of data analysis methods has transformed standards of living across the globe. Today, statistics has become deeply interwoven with the field of data science. Statistical models have grown to include those used in AI and machine learning, which often help draw inferences from non-numerical data. Tasks such as computing an estimator or automating randomization can be done much more quickly today thanks to statistical and analytical software. Some of the most common languages and programs you’re likely to encounter in the statistics and data science fields include R, Stata, SPSS, Python, C and SQL.

Descriptive Statistics Basics

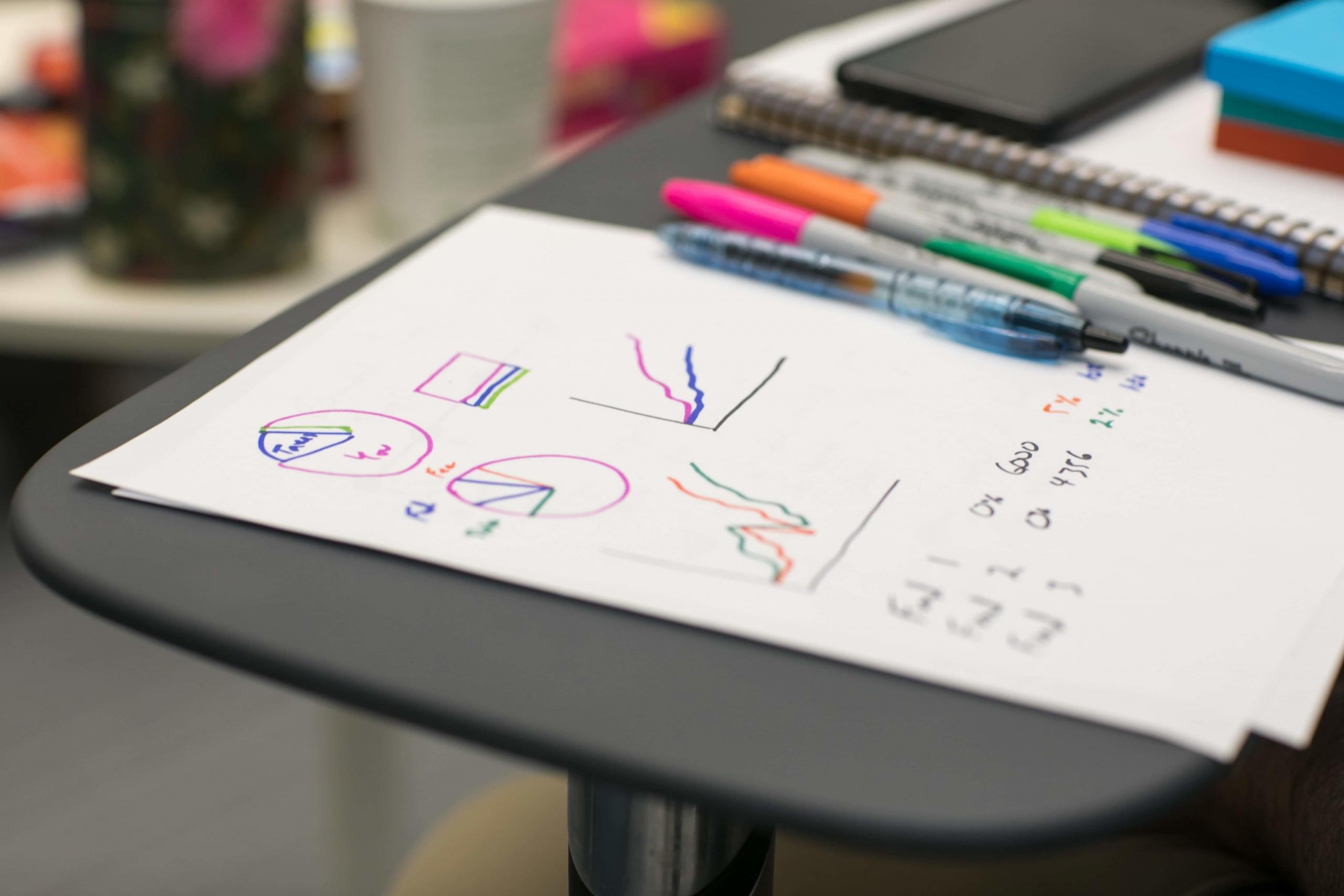

Whether you’ve built a histogram for a science project or regularly use data visualization tools at work, you’ve participated in one of the most important branches in the discipline of statistics: descriptive statistics. Statistics is split into two main branches, and this first branch deals with data after it has been collected, using statistical techniques to understand the composition of the data set. Often the first part of any study design, descriptive statistics reveal vital insights into the qualitative or quantitative data being considered. Even the most experienced data scientists cannot interpret much about their data sets before conducting preliminary descriptive analyses.

Whether the data is ordinal, categorical or numerical, descriptive statistics fall under two categories: measures of central tendency and measures of variability. Measures of central tendency are used when someone wants to understand what the average looks like for one or more metrics. These measures include the sample mean, median and mode. While seemingly similar, each is appropriate in different circumstances, depending largely on whether the data contains a large number of outliers: the mean is pulled towards extreme values, while the median is not.

Measures of variability, on the other hand, include the standard deviation, variance and covariance. These are used when someone would like to know the spread of the data, which tells you how far the data lies from the centre, or average. This can be extremely helpful for understanding what percentage of your data falls within a certain range. When applied to financial statistics, the standard deviation can also be seen as the volatility of a particular data set.

Descriptive statistics are mostly used for univariate analysis, which is the analysis of a single variable. While this acts as a way of understanding the makeup of things like income or sales, it can also be helpful when comparing the makeup of multiple variables. For example, if a small business wants to take advantage of the sales data it has for a particular event, it can use descriptive statistics to determine the percentage of its customers that are over or under a certain age.

Descriptive statistics make up the vast majority of the statistics used by individuals, companies and governments. While forecasting future events is extremely important, many people only need measures of central tendency and variability to extract meaningful information for their decision making. Some of the most powerful measures included in descriptive statistics are:

- Correlation coefficient

- Simple data visualization

- Distributions (binomial, normal, Laplace, etc.)
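
As a rough illustration of the measures described above, the sketch below uses Python’s built-in statistics module. The `ages` and `spend` lists are made-up data for a hypothetical sales event, and `pearson_r` is a helper name chosen here for illustration, so treat this as one possible way to compute these figures rather than a definitive recipe.

```python
import math
import statistics

# Hypothetical customer ages and spend at a sales event (illustrative data only).
ages = [22, 25, 25, 31, 34, 38, 41, 45, 52, 87]
spend = [15, 18, 20, 24, 30, 33, 35, 41, 44, 60]

# Measures of central tendency.
mean_age = statistics.mean(ages)      # pulled upwards by the outlier (87)
median_age = statistics.median(ages)  # middle value, less affected by the outlier
mode_age = statistics.mode(ages)      # most frequent value

# Measure of variability: sample standard deviation.
std_age = statistics.stdev(ages)

# Share of customers under a chosen age threshold.
threshold = 40
share_under = sum(age < threshold for age in ages) / len(ages)


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length lists."""
    mean_x, mean_y = statistics.mean(xs), statistics.mean(ys)
    cross = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cross / (spread_x * spread_y)


print(f"mean={mean_age}, median={median_age}, mode={mode_age}")
print(f"standard deviation={std_age:.2f}")
print(f"share under {threshold}: {share_under:.0%}")
print(f"correlation between age and spend: {pearson_r(ages, spend):.2f}")
```

In this made-up sample the single 87-year-old customer lifts the mean above the median, which is the kind of situation where the median is often the more representative average.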

Inferential Statistics

The next branch of the discipline combines probability and statistics in order not only to understand what is inside the data, but also to use that data to make predictions. This type of statistical analysis, called inferential statistics, typically draws on probability theory and probability distributions in order to conduct multivariate analysis, meaning analysis of several variables. Also known as mathematical statistics, this branch can also reveal important relationships within the data without assuming a particular probability distribution, by using non-parametric models. The majority of inferential data analysis, however, relies on parametric models such as general linear regression models or analysis of variance (ANOVA) tests.

Regardless of whether it’s a parametric or non-parametric test, the mathematician or statistician will have to meet two criteria: have a set of variables they’d like to test and have their data meet certain assumptions. The first criterion is simple and involves a process we all understand: picking one or more independent variables in order to try to predict one or more dependent variables. The second criterion is where most statisticians have trouble, because most data sets do not strictly satisfy the assumptions required by certain models, such as the data following a normal distribution. The Gauss-Markov assumptions for classical linear models are the most commonly known and are key to understanding inferential statistics.

Inferential statistics is also distinct from descriptive statistics because it involves testing a null hypothesis against an alternative hypothesis. Using the models available, along with statistical software such as R or SPSS, you will be able to derive estimators and predictions of the mean along with their confidence intervals. If you’re just starting to learn about statistics, some of the most common parametric models include:

- General linear models

- Logistic regression models

On the other hand, some of the more common non-parametric models include:

- Cluster analysis

- Factor analysis

- Discriminant analysis

Along with these models, ANOVA is a common way for statisticians to judge which of two or more models fits more precisely, by comparing how much of the variance in the data each model explains.
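
To make the idea of fitting a parametric model and comparing variances more concrete, here is a minimal sketch in plain Python. It fits a simple linear regression by least squares and computes the F statistic that compares the fitted line with an intercept-only model. The `x` and `y` values are invented, and `fit_line` and `f_statistic` are hypothetical helper names; in practice you would normally rely on software such as R or SPSS, which also reports the p-value.

```python
import statistics

# Hypothetical data: one independent variable (x) and one dependent variable (y).
x = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0]
y = [2.1, 3.9, 6.2, 7.8, 10.1, 11.9]


def fit_line(xs, ys):
    """Ordinary least squares estimates for y = intercept + slope * x."""
    mean_x, mean_y = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((xi - mean_x) ** 2 for xi in xs)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return intercept, slope


def f_statistic(xs, ys):
    """F statistic comparing the fitted line with an intercept-only model.

    Assumes the fit is not perfect, so the residual sum of squares is non-zero.
    """
    intercept, slope = fit_line(xs, ys)
    mean_y = statistics.fmean(ys)
    fitted = [intercept + slope * xi for xi in xs]
    # Variation explained by the line (1 degree of freedom).
    ss_model = sum((fi - mean_y) ** 2 for fi in fitted)
    # Variation left unexplained (n - 2 degrees of freedom).
    ss_resid = sum((yi - fi) ** 2 for yi, fi in zip(ys, fitted))
    return (ss_model / 1) / (ss_resid / (len(xs) - 2))


intercept, slope = fit_line(x, y)
print(f"intercept={intercept:.3f}, slope={slope:.3f}")
print(f"F statistic={f_statistic(x, y):.1f}")
```

A large F statistic suggests the line explains far more variance than the intercept-only model. Turning it into a formal test of the null hypothesis requires the F distribution, which this sketch leaves to dedicated statistical software.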

Tips and Resources for Statistics

From understanding which statistical methodology to employ in categorical data analysis to comprehending how the concept of a random variable affects least squares and regression analysis, here are some statistics tips and resources to follow if you need any sort of statistics help.

Academic

Need help interpreting the statistical significance of your results or knowing which parametric test to employ on your observational data? Heading over to Cross Validated, the statistics forum on Stack Exchange, will most likely give you the answer to your question. If you’re interested in getting tutored in statistics, browse through Superprof’s community of almost 150,000 maths teachers in the UK. From chi-square tests to drawing inferences from data sets, a maths teacher can guide you through the field.

Programming

Stack Overflow is another great online forum that can help you with everything coding related. From including only certain outliers in your experimental design to running a regression analysis, its community will help you troubleshoot your coding problems.
